I’ve spent nearly two decades building brand systems at enterprise scale. Global rebrands, template governance, design hubs, asset libraries—the infrastructure that keeps a brand coherent when 50,000 people are using it across numerous countries.

So when I started building Together Better Books—a personalised children’s publishing platform for neurodivergent kids—I expected the illustration challenge to be familiar. Design the assets, create the rules, build the system, enforce consistency.

What I didn’t expect was that training a LoRA, a lightweight fine-tune of an AI image model, would teach me something new about how brand systems work. And that the lesson applies far beyond children’s books.

The problem: one illustrator, infinite variations

Together Better Books personalises every story for the child reading it. Their hair, skin tone, body type, clothing. The lead character looks like them. Across eight stories, each with unique creature characters, environments, and props, the number of possible illustration combinations runs into the thousands.

No illustrator can hand-draw every version. The economics collapse immediately. So the question became: how do you scale one artist’s creative vision across thousands of outputs without losing what makes it theirs?

Enter the LoRA

A LoRA (Low-Rank Adaptation) is a way to fine-tune an AI image model on a specific visual style by training a small set of additional weights instead of retraining the whole model. You feed it 20 to 50 illustrations from a single artist, and the model learns to generate new images that match their linework, colour palette, and visual personality. The moment I understood this, my brain went straight to brand.
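For readers who want to see the mechanics, here is a minimal sketch of the low-rank idea in plain Python. It is a toy illustration, not the training setup used for Together Better Books: the base model’s weight matrix stays frozen, and the adaptation is stored as two much smaller matrices whose product is added on top. The function names (`apply_lora`, `trainable_params`) and the matrix sizes are assumptions made up for this example.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def apply_lora(w, b, a, scale=1.0):
    """Return w + scale * (b @ a). The frozen weight w is not modified."""
    delta = matmul(b, a)
    return [
        [w_val + scale * d_val for w_val, d_val in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d_out, d_in, rank):
    """Values stored in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Toy example: a frozen 3x3 weight plus a rank-1 update (all numbers hypothetical).
frozen_w = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
factor_b = [[1.0], [0.0], [2.0]]  # 3 x 1
factor_a = [[0.5, 0.0, 1.0]]      # 1 x 3
adapted_w = apply_lora(frozen_w, factor_b, factor_a)

# Size comparison for one hypothetical 1024 x 1024 layer at rank 8.
full_size = 1024 * 1024
lora_size = trainable_params(1024, 1024, 8)
```

The point of the comparison at the end is scale: the low-rank factors hold a small fraction of the values in the full layer, which is part of why a few dozen illustrations can be enough to steer a style without retraining the whole model.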

What the illustrator draws vs. what the AI generates

We structured the illustration brief around a simple principle: the illustrator designs the creative vocabulary, and the LoRA learns to speak in it.

The illustrator I’m working with, Lani Greener—another local mum and amazing artist from the Sunny Coast—is responsible for the things that require creative judgment: the modular character system, the unique creature characters, the core environments, the signature props that carry emotional weight. Everything else—personalised child variants, backgrounds at different times of day, recoloured clothing—comes from the LoRA extending her work.

Lani draws each thing once. The AI draws it a thousand different ways, in her style, without drift.

Illustration © Illana Greener

The modularity that makes personalisation possible

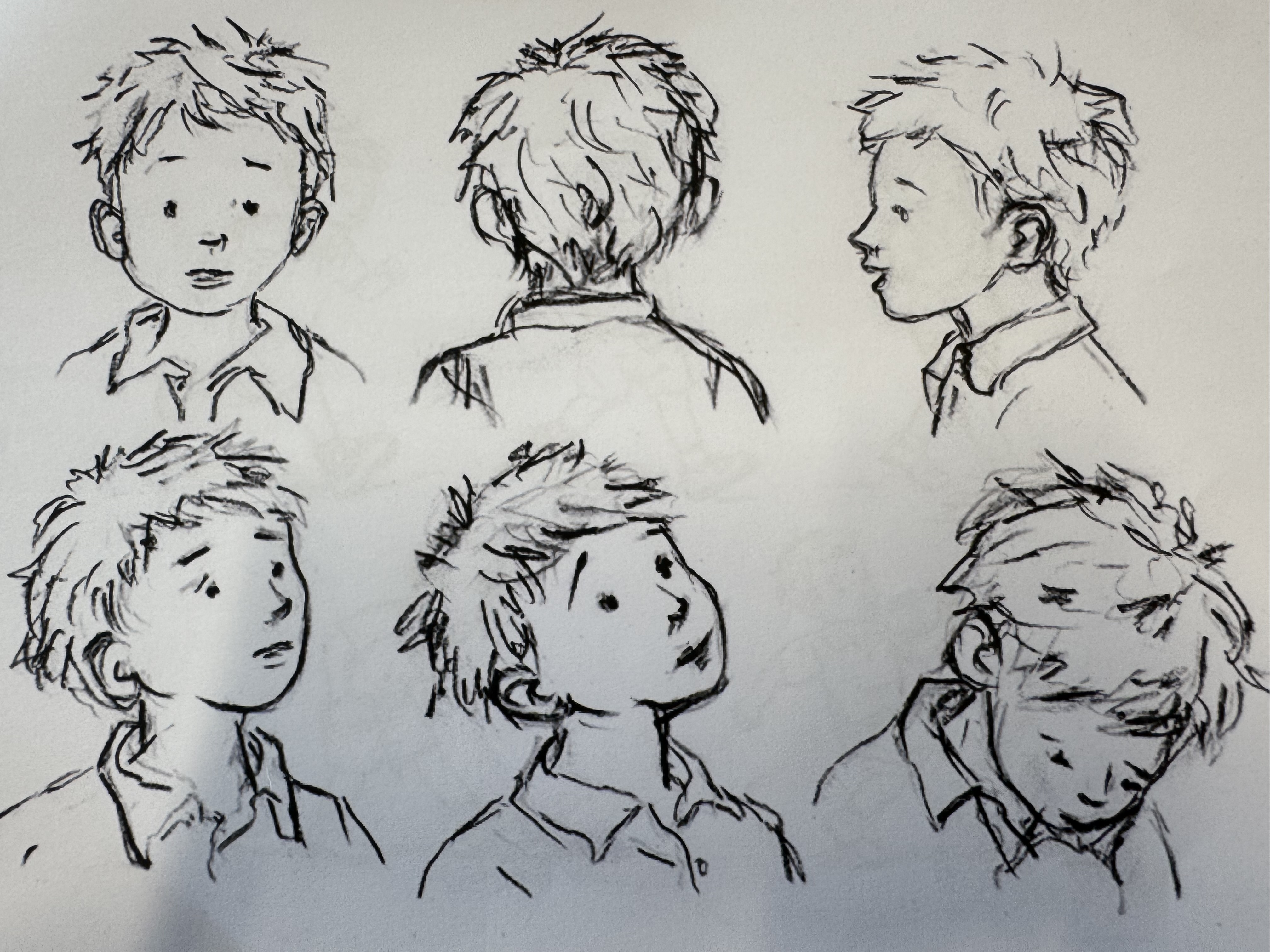

Lani doesn’t draw complete characters. She draws bodies, heads, hairstyles, and expressions as separate components. The AI assembles and recolours them. This is the same principle that makes enterprise brand systems work: the more modular your creative source material, the more effectively an AI can recombine and extend it.

Character expression studies © Illana Greener
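To make the modular approach concrete, here is a rough sketch of how separately drawn components multiply into variants. The four component types (bodies, heads, hairstyles, expressions) come from the approach described above; the specific options listed and the names `COMPONENTS`, `count_variants`, and `iter_variants` are hypothetical placeholders, not the real asset list.

```python
from itertools import product
from math import prod

# Hypothetical component library: each entry stands for one hand-drawn asset.
COMPONENTS = {
    "body": ["slim", "average", "broad"],
    "head": ["round", "oval"],
    "hairstyle": ["short", "curly", "braids", "long"],
    "expression": ["happy", "curious", "worried", "calm"],
}


def count_variants(components):
    """Number of distinct characters that can be assembled from the parts."""
    return prod(len(options) for options in components.values())


def iter_variants(components):
    """Yield every combination as a dict, one chosen option per component type."""
    keys = list(components)
    for combo in product(*(components[key] for key in keys)):
        yield dict(zip(keys, combo))


drawn_assets = sum(len(options) for options in COMPONENTS.values())
total_variants = count_variants(COMPONENTS)
first_variant = next(iter_variants(COMPONENTS))
```

In this made-up library, 13 drawn components yield 96 assembled characters before any recolouring. Add skin tone and clothing colour as further dimensions and the count climbs quickly, which is why componentised source material matters so much.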

Brands that have invested in structured, componentised design systems are already positioned for this. Their asset libraries are training-ready. Brands still working from flat files and one-off productions will need to restructure before they can take advantage. The organisations that built disciplined, modular creative systems over the past decade are the ones that will scale fastest with AI.

The brand systems parallel

Every enterprise brand system follows the same fundamental structure: a small set of hand-crafted, high-judgment decisions—positioning, identity, core visual language—that get extended across a much larger set of lower-judgment applications.

The traditional approach uses template governance, brand guidelines, and approval workflows to manage that extension. It works, but it’s slow, expensive, and fragile. The further you get from the original creative intent, the more the brand drifts. Anyone who’s watched a 200-page brand guideline get interpreted by a regional team with no design training knows this.

A LoRA changes the economics of that extension layer entirely. Instead of writing rules about how to apply your visual style and hoping people follow them, you train a model on the style itself. The rules become embedded in the output. The AI doesn’t interpret guidelines—it generates from the source material directly.

The design team’s role shifts from production to curation and quality control. The volume of on-brand creative output scales dramatically without scaling headcount.

The catch

A LoRA can only remix what it’s seen. It cannot invent. It cannot make the strategic decisions that define a brand’s visual identity in the first place. Templates don’t make creative decisions. Guidelines don’t have taste. The system extends the thinking—it doesn’t replace the thinker.

Creature character design © Illana Greener

What a LoRA does is compress the distance between the creative intent and the final output. In a traditional brand system, that distance is filled with interpretation, approximation, and drift. In a LoRA-enabled system, the AI generates directly from the source, and the distance shrinks to nearly zero.

The part about people

Any conversation about AI-generated imagery eventually arrives at the same question: what happens to the artists? For Together Better Books, the answer is straightforward. Lani’s illustrations are the sole training data for the LoRA. She knows how her work will be used, she can see the outputs, and she has creative oversight. Consent, ongoing compensation, credit, creative oversight, and defined scope: this is how we’re structuring it, and I think it’s how the industry needs to move.

Where this goes

I’m using a LoRA to personalise children’s books. But the same pipeline—illustrator creates source material, LoRA learns the style, AI generates at scale—applies to any brand producing visual content at volume.

The creative source still needs to be excellent. The strategic decisions still need to be human. The brand still needs a point of view worth scaling. And the artist who creates the visual foundation needs to be treated as a partner, with compensation structures that reflect the long-term value of what they’re enabling.

The production layer between “what the brand should look like” and “what the brand actually looks like in the wild” is about to get very, very thin. As someone who’s spent 18 years trying to keep brands coherent at scale, and who’s now building a publishing platform that depends entirely on one illustrator’s talent being honoured and extended with care, I think we can build that future well.
